Compare commits

58 Commits

photometry...master

| SHA1 |
|---|
| 43ac48bf56 |
| e9d801bdc1 |
| 4a5bdc735c |
| 9253fcab5f |
| 69d385f257 |
| 62cd89fd0d |
| a8090ca58a |
| ed2ba61828 |
| e94d4a06b5 |
| 0ef71b9220 |
| 5a80f97b68 |
| 876fa7617c |
| 14825c344e |
| 02156bd89a |
| 1380ec426f |
| a7c296ca3d |
| 1a07ba3d3c |
| 3873c978dd |
| 6ee756941c |
| 6bcc72f826 |
| 737c23f32a |
| 58fe991647 |
| d013a1b080 |
| 7b7e966217 |
| 53e2a5d524 |
| 3ae7bdb13a |
| 715ca6a6b4 |
| 9f528c1ea1 |
| bfb2a551a7 |
| af1820a238 |
| 655416a490 |
| 010db87e4b |
| 5451c2d097 |
| b3e86b3e64 |
| ebc73c0c5e |
| 273bbf8a94 |
| 1d6100ed13 |
| 6f7f8b7892 |
| c565033d7f |
| bcf948bcc1 |
| 02b9e0bc78 |
| c79fc173b3 |
| ecab936c62 |
| 2d045660c2 |
| dcbc69a1c4 |
| 1b29047451 |
| 07f4927128 |
| acca4ab018 |
| 44fffa19a2 |
| 9b4bf12846 |
| 5f6bcd1784 |
| 2a16fb2fc5 |
| 5d36a123a7 |
| 0d544c5361 |
| d9899227c2 |
| e788854fe8 |
| 4842c175a5 |
| 9ded72a366 |

16

.vscode/c_cpp_properties.json

vendored

Normal file


@ -0,0 +1,16 @@

{
    "configurations": [
        {
            "name": "Linux",
            "includePath": [
                "${workspaceFolder}/**"
            ],
            "defines": [],
            "compilerPath": "/usr/bin/gcc",
            "cStandard": "c11",
            "cppStandard": "c++14",
            "intelliSenseMode": "clang-x64"
        }
    ],
    "version": 4
}

BIN

.vscode/ipch/778a17e566a4909e/mmap_address.bin

vendored

Normal file


Binary file not shown.

BIN

.vscode/ipch/ccf983af1f87ec2b/mmap_address.bin

vendored

Normal file


Binary file not shown.

BIN

.vscode/ipch/e40aedd19a224f8d/mmap_address.bin

vendored

Normal file


Binary file not shown.

6

.vscode/settings.json

vendored

Normal file


@ -0,0 +1,6 @@

{
    "files.associations": {
        "core": "cpp",
        "sparsecore": "cpp"
    }
}

30

README.md


@ -1,12 +1,22 @@

|

||||

# MSCKF\_VIO

|

||||

|

||||

|

||||

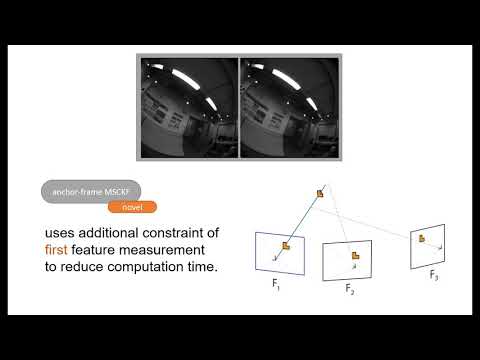

The `MSCKF_VIO` package is a stereo version of MSCKF. The software takes in synchronized stereo images and IMU messages and generates real-time 6DOF pose estimation of the IMU frame.

|

||||

The `MSCKF_VIO` package is a stereo-photometric version of MSCKF. The software takes in synchronized stereo images and IMU messages and generates real-time 6DOF pose estimation of the IMU frame.

|

||||

|

||||

The software is tested on Ubuntu 16.04 with ROS Kinetic.

|

||||

This approach is based on the paper written by Ke Sun et al.

|

||||

[https://arxiv.org/abs/1712.00036](https://arxiv.org/abs/1712.00036) and their Stereo MSCKF implementation, which tightly fuses the matched feature information of a stereo image pair into a 6DOF pose.

|

||||

The approach implemented in this repository follows the semi-dense MSCKF approach, tightly fusing the photometric

|

||||

information around the matched features into the covariance matrix, as described and derived in the master's thesis [Pose Estimation using a Stereo-Photometric Multi-State Constraint Kalman Filter](http://raphael.maenle.net/resources/sp-msckf/maenle_master_thesis.pdf).

|

||||

|

||||

Its positioning accuracy is comparable to that of the approach from Ke Sun et al., while the photometric approach comes with a higher

|

||||

computational load, especially with larger image patches around the feature. A video explaining the approach can be

|

||||

found on [https://youtu.be/HrqQywAnenQ](https://youtu.be/HrqQywAnenQ):

|

||||

<br/>

|

||||

[](https://www.youtube.com/watch?v=HrqQywAnenQ)

|

||||

|

||||

<br/>

|

||||

This software should be deployed using ROS Kinetic on Ubuntu 16.04 or 18.04.

|

||||

|

||||

Video: [https://www.youtube.com/watch?v=jxfJFgzmNSw&t](https://www.youtube.com/watch?v=jxfJFgzmNSw&t=3s)<br/>

|

||||

Paper Draft: [https://arxiv.org/abs/1712.00036](https://arxiv.org/abs/1712.00036)

|

||||

|

||||

## License

|

||||

|

||||

@ -28,16 +38,6 @@ cd your_work_space

|

||||

catkin_make --pkg msckf_vio --cmake-args -DCMAKE_BUILD_TYPE=Release

|

||||

```

|

||||

|

||||

## Calibration

|

||||

|

||||

An accurate calibration is crucial for successfully running the software. To get the best performance of the software, the stereo cameras and IMU should be hardware synchronized. Note that for the stereo calibration, which includes the camera intrinsics, distortion, and extrinsics between the two cameras, you have to use a calibration software. **Manually setting these parameters will not be accurate enough.** [Kalibr](https://github.com/ethz-asl/kalibr) can be used for the stereo calibration and also to get the transformation between the stereo cameras and IMU. The yaml file generated by Kalibr can be directly used in this software. See calibration files in the `config` folder for details. The two calibration files in the `config` folder should work directly with the EuRoC and [fast flight](https://github.com/KumarRobotics/msckf_vio/wiki) datasets. The convention of the calibration file is as follows:

|

||||

|

||||

`camx/T_cam_imu`: takes a vector from the IMU frame to the camx frame.

|

||||

`cam1/T_cn_cnm1`: takes a vector from the cam0 frame to the cam1 frame.
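As an illustration of the convention above, the following is a minimal sketch of applying such a transform to a point. It assumes the 4x4 matrix is stored row-major as a flat list, which is how the matrices appear in the calibration files; `Mat4`, `Vec3`, and `transformPoint` are hypothetical names and not part of this package.

```cpp
#include <array>
#include <cstddef>

using Vec3 = std::array<double, 3>;
using Mat4 = std::array<double, 16>;  // row-major 4x4 (assumed layout)

// Apply a homogeneous transform to a point: p_out = R * p_in + t.
// With T = T_cam_imu, a point expressed in the IMU frame is mapped
// into the camera frame.
Vec3 transformPoint(const Mat4& T, const Vec3& p) {
  Vec3 out{};
  for (std::size_t r = 0; r < 3; ++r) {
    out[r] = T[4 * r + 0] * p[0] + T[4 * r + 1] * p[1] +
             T[4 * r + 2] * p[2] + T[4 * r + 3];
  }
  return out;
}
```

Under the same assumption, passing `cam1/T_cn_cnm1` to the same function would map a point from the cam0 frame into the cam1 frame.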

|

||||

|

||||

The filter uses the first 200 IMU messages to initialize the gyro bias, acc bias, and initial orientation. Therefore, the robot is required to start from a stationary state in order to initialize the VIO successfully.
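The reason for the stationary start can be sketched as follows: while the sensor is at rest, the true angular velocity is zero, so the mean of the gyro readings approximates the gyro bias. This is only a rough illustration of that idea and not the initialization code of this package; `meanOfFirst` is a hypothetical helper, and the accelerometer bias and gravity-aligned initial orientation are not covered here.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Vec3 = std::array<double, 3>;

// Average the first n samples. For a stationary sensor, the mean gyro
// reading is an estimate of the gyro bias.
Vec3 meanOfFirst(const std::vector<Vec3>& samples, std::size_t n) {
  assert(n > 0 && samples.size() >= n);
  Vec3 mean{0.0, 0.0, 0.0};
  for (std::size_t i = 0; i < n; ++i) {
    for (std::size_t k = 0; k < 3; ++k) {
      mean[k] += samples[i][k];
    }
  }
  for (double& v : mean) {
    v /= static_cast<double>(n);
  }
  return mean;
}
```

Calling `meanOfFirst(gyro_buffer, 200)` on a buffer of the first 200 gyro readings would give the bias estimate in this sketch; any motion during that window would leak into the estimate, hence the stationary requirement.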

|

||||

|

||||

|

||||

## EuRoC and UPenn Fast flight dataset example usage

|

||||

|

||||

First obtain either the [EuRoC](https://projects.asl.ethz.ch/datasets/doku.php?id=kmavvisualinertialdatasets) or the [UPenn fast flight](https://github.com/KumarRobotics/msckf_vio/wiki/Dataset) dataset.

|

||||

@ -75,6 +75,8 @@ To visualize the pose and feature estimates you can use the provided rviz config

|

||||

|

||||

## ROS Nodes

|

||||

|

||||

The general structure is similar to the structure of the MSCKF implementation this repository is derived from.

|

||||

|

||||

### `image_processor` node

|

||||

|

||||

**Subscribed Topics**

|

||||

|

||||

@ -9,7 +9,7 @@ cam0:

|

||||

0, 0, 0, 1.000000000000000]

|

||||

camera_model: pinhole

|

||||

distortion_coeffs: [-0.28340811, 0.07395907, 0.00019359, 1.76187114e-05]

|

||||

distortion_model: radtan

|

||||

distortion_model: pre-radtan

|

||||

intrinsics: [458.654, 457.296, 367.215, 248.375]

|

||||

resolution: [752, 480]

|

||||

timeshift_cam_imu: 0.0

|

||||

@ -26,7 +26,7 @@ cam1:

|

||||

0, 0, 0, 1.000000000000000]

|

||||

camera_model: pinhole

|

||||

distortion_coeffs: [-0.28368365, 0.07451284, -0.00010473, -3.55590700e-05]

|

||||

distortion_model: radtan

|

||||

distortion_model: pre-radtan

|

||||

intrinsics: [457.587, 456.134, 379.999, 255.238]

|

||||

resolution: [752, 480]

|

||||

timeshift_cam_imu: 0.0

|

||||

|

||||

36

config/camchain-imucam-tum-scaled.yaml

Normal file


@ -0,0 +1,36 @@

cam0:
  T_cam_imu:
    [-0.9995378259923383, 0.02917807204183088, -0.008530798463872679, 0.047094247958417004,
    0.007526588843243184, -0.03435493139706542, -0.9993813532126198, -0.04788273017221637,
    -0.029453096117288798, -0.9989836729399656, 0.034119442089241274, -0.0697294754693238,
    0.0, 0.0, 0.0, 1.0]
  camera_model: pinhole
  distortion_coeffs: [0.0034823894022493434, 0.0007150348452162257, -0.0020532361418706202,
    0.00020293673591811182]
  distortion_model: pre-equidistant
  intrinsics: [190.97847715128717, 190.9733070521226, 254.93170605935475, 256.8974428996504]
  resolution: [512, 512]
  rostopic: /cam0/image_raw
cam1:
  T_cam_imu:
    [-0.9995240747493029, 0.02986739485347808, -0.007717688852024281, -0.05374086123613335,
    0.008095979457928231, 0.01256553460985914, -0.9998882749870535, -0.04648588412432889,
    -0.02976708103202316, -0.9994748851595197, -0.0128013601698453, -0.07333210787623645,
    0.0, 0.0, 0.0, 1.0]
  T_cn_cnm1:
    [0.9999994317488622, -0.0008361847221513937, -0.0006612844045898121, -0.10092123225528335,
    0.0008042457277382264, 0.9988989443471681, -0.04690684567228134, -0.001964540595211977,
    0.0006997790813734836, 0.04690628718225568, 0.9988990492196964, -0.0014663556043866572,
    0.0, 0.0, 0.0, 1.0]
  camera_model: pinhole
  distortion_coeffs: [0.0034003170790442797, 0.001766278153469831, -0.00266312569781606,
    0.0003299517423931039]
  distortion_model: pre-equidistant
  intrinsics: [190.44236969414825, 190.4344384721956, 252.59949716835982, 254.91723064636983]
  resolution: [512, 512]
  rostopic: /cam1/image_raw
T_imu_body:
  [1.0000, 0.0000, 0.0000, 0.0000,
  0.0000, 1.0000, 0.0000, 0.0000,
  0.0000, 0.0000, 1.0000, 0.0000,
  0.0000, 0.0000, 0.0000, 1.0000]

@ -18,6 +18,8 @@ namespace msckf_vio {

|

||||

|

||||

struct Frame{

|

||||

cv::Mat image;

|

||||

cv::Mat dximage;

|

||||

cv::Mat dyimage;

|

||||

double exposureTime_ms;

|

||||

};

|

||||

|

||||

@ -39,6 +41,7 @@ struct CameraCalibration{

|

||||

cv::Vec4d distortion_coeffs;

|

||||

movingWindow moving_window;

|

||||

cv::Mat featureVisu;

|

||||

int id;

|

||||

};

|

||||

|

||||

|

||||

|

||||

@ -70,6 +70,11 @@ struct Feature {

|

||||

position(Eigen::Vector3d::Zero()),

|

||||

is_initialized(false), is_anchored(false) {}

|

||||

|

||||

|

||||

void Rhocost(const Eigen::Isometry3d& T_c0_ci,

|

||||

const double x, const Eigen::Vector2d& z1, const Eigen::Vector2d& z2,

|

||||

double& e) const;

|

||||

|

||||

/*

|

||||

* @brief cost Compute the cost of the camera observations

|

||||

* @param T_c0_c1 A rigid body transformation takes

|

||||

@ -82,6 +87,13 @@ struct Feature {

|

||||

const Eigen::Vector3d& x, const Eigen::Vector2d& z,

|

||||

double& e) const;

|

||||

|

||||

bool initializeRho(const CamStateServer& cam_states);

|

||||

|

||||

inline void RhoJacobian(const Eigen::Isometry3d& T_c0_ci,

|

||||

const double x, const Eigen::Vector2d& z1, const Eigen::Vector2d& z2,

|

||||

Eigen::Matrix<double, 2, 1>& J, Eigen::Vector2d& r,

|

||||

double& w) const;

|

||||

|

||||

/*

|

||||

* @brief jacobian Compute the Jacobian of the camera observation

|

||||

* @param T_c0_c1 A rigid body transformation takes

|

||||

@ -97,6 +109,10 @@ struct Feature {

|

||||

Eigen::Matrix<double, 2, 3>& J, Eigen::Vector2d& r,

|

||||

double& w) const;

|

||||

|

||||

inline double generateInitialDepth(

|

||||

const Eigen::Isometry3d& T_c1_c2, const Eigen::Vector2d& z1,

|

||||

const Eigen::Vector2d& z2) const;

|

||||

|

||||

/*

|

||||

* @brief generateInitialGuess Compute the initial guess of

|

||||

* the feature's 3d position using only two views.

|

||||

@ -121,6 +137,7 @@ struct Feature {

|

||||

inline bool checkMotion(

|

||||

const CamStateServer& cam_states) const;

|

||||

|

||||

|

||||

/*

|

||||

* @brief InitializeAnchor generates the NxN patch around the

|

||||

* feature in the Anchor image

|

||||

@ -148,6 +165,13 @@ struct Feature {

|

||||

inline bool initializePosition(

|

||||

const CamStateServer& cam_states);

|

||||

|

||||

cv::Point2f pixelDistanceAt(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

const CameraCalibration& cam,

|

||||

Eigen::Vector3d& in_p) const;

|

||||

|

||||

|

||||

/*

|

||||

* @brief project PositionToCamera Takes a 3d position in a world frame

|

||||

* and projects it into the passed camera frame using pinhole projection

|

||||

@ -160,6 +184,21 @@ struct Feature {

|

||||

const CameraCalibration& cam,

|

||||

Eigen::Vector3d& in_p) const;

|

||||

|

||||

double CompleteCvKernel(

|

||||

const cv::Point2f pose,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam,

|

||||

std::string type) const;

|

||||

|

||||

double cvKernel(

|

||||

const cv::Point2f pose,

|

||||

std::string type) const;

|

||||

|

||||

double Kernel(

|

||||

const cv::Point2f pose,

|

||||

const cv::Mat frame,

|

||||

std::string type) const;

|

||||

|

||||

/*

|

||||

* @brief IrradianceAnchorPatch_Camera returns irradiance values

|

||||

* of the Anchor Patch position in a camera frame

|

||||

@ -169,7 +208,7 @@ struct Feature {

|

||||

bool estimate_FrameIrradiance(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0,

|

||||

CameraCalibration& cam,

|

||||

std::vector<double>& anchorPatch_estimate,

|

||||

IlluminationParameter& estimatedIllumination) const;

|

||||

|

||||

@ -177,14 +216,17 @@ bool MarkerGeneration(

|

||||

ros::Publisher& marker_pub,

|

||||

const CamStateServer& cam_states) const;

|

||||

|

||||

bool VisualizeKernel(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0) const;

|

||||

|

||||

bool VisualizePatch(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0,

|

||||

const std::vector<double> photo_r,

|

||||

std::stringstream& ss,

|

||||

cv::Point2f gradientVector,

|

||||

cv::Point2f residualVector) const;

|

||||

CameraCalibration& cam,

|

||||

const Eigen::VectorXd& photo_r,

|

||||

std::stringstream& ss) const;

|

||||

/*

|

||||

* @brief AnchorPixelToPosition uses the calculated pixels

|

||||

* of the anchor patch to generate 3D positions of all of them

|

||||

@ -235,6 +277,14 @@ inline Eigen::Vector3d AnchorPixelToPosition(cv::Point2f in_p,

|

||||

bool is_initialized;

|

||||

bool is_anchored;

|

||||

|

||||

|

||||

cv::Mat abs_xderImage;

|

||||

cv::Mat abs_yderImage;

|

||||

|

||||

cv::Mat xderImage;

|

||||

cv::Mat yderImage;

|

||||

|

||||

cv::Mat anchorImage_blurred;

|

||||

cv::Point2f anchor_center_pos;

|

||||

cv::Point2f undist_anchor_center_pos;

|

||||

// Noise for a normalized feature measurement.

|

||||

@ -250,6 +300,26 @@ typedef std::map<FeatureIDType, Feature, std::less<int>,

|

||||

Eigen::aligned_allocator<

|

||||

std::pair<const FeatureIDType, Feature> > > MapServer;

|

||||

|

||||

void Feature::Rhocost(const Eigen::Isometry3d& T_c0_ci,

|

||||

const double x, const Eigen::Vector2d& z1, const Eigen::Vector2d& z2,

|

||||

double& e) const

|

||||

{

|

||||

// Compute hi1, hi2, and hi3 as Equation (37).

|

||||

const double& rho = x;

|

||||

|

||||

Eigen::Vector3d h = T_c0_ci.linear()*

|

||||

Eigen::Vector3d(z1(0), z1(1), 1.0) + rho*T_c0_ci.translation();

|

||||

double& h1 = h(0);

|

||||

double& h2 = h(1);

|

||||

double& h3 = h(2);

|

||||

|

||||

// Predict the feature observation in ci frame.

|

||||

Eigen::Vector2d z_hat(h1/h3, h2/h3);

|

||||

|

||||

// Compute the residual.

|

||||

e = (z_hat-z2).squaredNorm();

|

||||

return;

|

||||

}
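The inverse-depth cost in `Rhocost` can be restated without Eigen as a small standalone sketch. It assumes `R` and `t` are the rotation and translation of `T_c0_ci`, `z1` is the normalized observation in c0, and `z2` the normalized observation in ci; `inverseDepthCost` and the array aliases are hypothetical names used only for illustration.

```cpp
#include <array>
#include <cassert>

using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Squared reprojection error of a feature parameterized by inverse depth rho.
// h = R * (z1.x, z1.y, 1) + rho * t is the feature in the ci frame, scaled
// by rho; the scale cancels in the perspective division.
double inverseDepthCost(const Mat3& R, const Vec3& t, double rho,
                        const Vec2& z1, const Vec2& z2) {
  const Vec3 ray{z1[0], z1[1], 1.0};
  Vec3 h{};
  for (int r = 0; r < 3; ++r) {
    h[r] = R[r][0] * ray[0] + R[r][1] * ray[1] + R[r][2] * ray[2] + rho * t[r];
  }
  assert(h[2] != 0.0);
  const double du = h[0] / h[2] - z2[0];
  const double dv = h[1] / h[2] - z2[1];
  return du * du + dv * dv;
}
```

For an identity rotation, a baseline of `t = (-0.1, 0, 0)` and `rho = 0.5` (depth 2), the observation `z1 = (0.1, 0.2)` predicts `(0.05, 0.2)` in the second view, so the cost vanishes when `z2` matches that prediction.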

|

||||

|

||||

void Feature::cost(const Eigen::Isometry3d& T_c0_ci,

|

||||

const Eigen::Vector3d& x, const Eigen::Vector2d& z,

|

||||

@ -262,9 +332,9 @@ void Feature::cost(const Eigen::Isometry3d& T_c0_ci,

|

||||

|

||||

Eigen::Vector3d h = T_c0_ci.linear()*

|

||||

Eigen::Vector3d(alpha, beta, 1.0) + rho*T_c0_ci.translation();

|

||||

double& h1 = h(0);

|

||||

double& h2 = h(1);

|

||||

double& h3 = h(2);

|

||||

double h1 = h(0);

|

||||

double h2 = h(1);

|

||||

double h3 = h(2);

|

||||

|

||||

// Predict the feature observation in ci frame.

|

||||

Eigen::Vector2d z_hat(h1/h3, h2/h3);

|

||||

@ -274,6 +344,42 @@ void Feature::cost(const Eigen::Isometry3d& T_c0_ci,

|

||||

return;

|

||||

}

|

||||

|

||||

void Feature::RhoJacobian(const Eigen::Isometry3d& T_c0_ci,

|

||||

const double x, const Eigen::Vector2d& z1, const Eigen::Vector2d& z2,

|

||||

Eigen::Matrix<double, 2, 1>& J, Eigen::Vector2d& r,

|

||||

double& w) const

|

||||

{

|

||||

|

||||

const double& rho = x;

|

||||

|

||||

Eigen::Vector3d h = T_c0_ci.linear()*

|

||||

Eigen::Vector3d(z1(0), z1(1), 1.0) + rho*T_c0_ci.translation();

|

||||

double& h1 = h(0);

|

||||

double& h2 = h(1);

|

||||

double& h3 = h(2);

|

||||

|

||||

// Compute the Jacobian.

|

||||

Eigen::Matrix3d W;

|

||||

W.leftCols<2>() = T_c0_ci.linear().leftCols<2>();

|

||||

W.rightCols<1>() = T_c0_ci.translation();

|

||||

|

||||

J(0,0) = -h1/(h3*h3);

|

||||

J(1,0) = -h2/(h3*h3);

|

||||

|

||||

// Compute the residual.

|

||||

Eigen::Vector2d z_hat(h1/h3, h2/h3);

|

||||

r = z_hat - z2;

|

||||

|

||||

// Compute the weight based on the residual.

|

||||

double e = r.norm();

|

||||

if (e <= optimization_config.huber_epsilon)

|

||||

w = 1.0;

|

||||

else

|

||||

w = optimization_config.huber_epsilon / (2*e);

|

||||

|

||||

return;

|

||||

}
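The residual weighting at the end of `RhoJacobian` can be isolated as a small function. The sketch below mirrors the branch shown in the diff (`residualWeight` is a hypothetical name). Note that with this formula the weight drops from 1 to 0.5 right past the threshold, whereas the textbook Huber weight `huber_epsilon / e` is continuous there; whether that difference is intended is not stated in the source.

```cpp
#include <cassert>

// Weight for a residual of norm e, given the threshold huber_epsilon.
// Residuals within the threshold keep full weight; larger residuals are
// down-weighted in inverse proportion to their norm.
double residualWeight(double e, double huber_epsilon) {
  assert(e >= 0.0 && huber_epsilon > 0.0);
  if (e <= huber_epsilon) {
    return 1.0;
  }
  return huber_epsilon / (2.0 * e);
}
```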

|

||||

|

||||

void Feature::jacobian(const Eigen::Isometry3d& T_c0_ci,

|

||||

const Eigen::Vector3d& x, const Eigen::Vector2d& z,

|

||||

Eigen::Matrix<double, 2, 3>& J, Eigen::Vector2d& r,

|

||||

@ -313,9 +419,9 @@ void Feature::jacobian(const Eigen::Isometry3d& T_c0_ci,

|

||||

return;

|

||||

}

|

||||

|

||||

void Feature::generateInitialGuess(

|

||||

double Feature::generateInitialDepth(

|

||||

const Eigen::Isometry3d& T_c1_c2, const Eigen::Vector2d& z1,

|

||||

const Eigen::Vector2d& z2, Eigen::Vector3d& p) const

|

||||

const Eigen::Vector2d& z2) const

|

||||

{

|

||||

// Construct a least square problem to solve the depth.

|

||||

Eigen::Vector3d m = T_c1_c2.linear() * Eigen::Vector3d(z1(0), z1(1), 1.0);

|

||||

@ -330,6 +436,15 @@ void Feature::generateInitialGuess(

|

||||

|

||||

// Solve for the depth.

|

||||

double depth = (A.transpose() * A).inverse() * A.transpose() * b;

|

||||

return depth;

|

||||

}
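The lines that build `A` and `b` are not visible in this hunk, so the following is only one plausible way to set up the least-squares problem, derived from the pinhole relation `z2 = (d*m + t).xy / (d*m + t).z` with `m = R * (z1, 1)`. Each image axis then gives one scalar equation `A_k * d = b_k`. The name `depthFromTwoViews` and the array aliases are assumptions for illustration.

```cpp
#include <array>
#include <cassert>

using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Least-squares depth d of a feature along the ray (z1, 1) in the first
// camera, given its normalized observation z2 in the second camera and the
// transform (R, t) from the first to the second camera frame.
double depthFromTwoViews(const Mat3& R, const Vec3& t,
                         const Vec2& z1, const Vec2& z2) {
  Vec3 m{};
  for (int r = 0; r < 3; ++r) {
    m[r] = R[r][0] * z1[0] + R[r][1] * z1[1] + R[r][2];
  }
  // d * (m_x - z2_x * m_z) = z2_x * t_z - t_x, and likewise for y.
  const Vec2 A{m[0] - z2[0] * m[2], m[1] - z2[1] * m[2]};
  const Vec2 b{z2[0] * t[2] - t[0], z2[1] * t[2] - t[1]};
  const double AtA = A[0] * A[0] + A[1] * A[1];
  assert(AtA > 0.0);  // zero means no parallax between the two views
  return (A[0] * b[0] + A[1] * b[1]) / AtA;
}
```

Because `A` is a 2x1 vector, `(A^T A)^{-1} A^T b` reduces to a ratio of two dot products, which is why no matrix inverse appears in the sketch.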

|

||||

|

||||

|

||||

void Feature::generateInitialGuess(

|

||||

const Eigen::Isometry3d& T_c1_c2, const Eigen::Vector2d& z1,

|

||||

const Eigen::Vector2d& z2, Eigen::Vector3d& p) const

|

||||

{

|

||||

double depth = generateInitialDepth(T_c1_c2, z1, z2);

|

||||

p(0) = z1(0) * depth;

|

||||

p(1) = z1(1) * depth;

|

||||

p(2) = depth;

|

||||

@ -379,10 +494,62 @@ bool Feature::checkMotion(const CamStateServer& cam_states) const

|

||||

else return false;

|

||||

}

|

||||

|

||||

double Feature::CompleteCvKernel(

|

||||

const cv::Point2f pose,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam,

|

||||

std::string type) const

|

||||

{

|

||||

|

||||

double delta = 0;

|

||||

|

||||

if(type == "Sobel_x")

|

||||

delta = ((double)cam.moving_window.find(cam_state_id)->second.dximage.at<short>(pose.y, pose.x))/255.;

|

||||

else if (type == "Sobel_y")

|

||||

delta = ((double)cam.moving_window.find(cam_state_id)->second.dyimage.at<short>(pose.y, pose.x))/255.;

|

||||

|

||||

return delta;

|

||||

}

|

||||

|

||||

double Feature::cvKernel(

|

||||

const cv::Point2f pose,

|

||||

std::string type) const

|

||||

{

|

||||

|

||||

double delta = 0;

|

||||

|

||||

if(type == "Sobel_x")

|

||||

delta = ((double)xderImage.at<short>(pose.y, pose.x))/255.;

|

||||

else if (type == "Sobel_y")

|

||||

delta = ((double)yderImage.at<short>(pose.y, pose.x))/255.;

|

||||

return delta;

|

||||

|

||||

}

|

||||

|

||||

double Feature::Kernel(

|

||||

const cv::Point2f pose,

|

||||

const cv::Mat frame,

|

||||

std::string type) const

|

||||

{

|

||||

Eigen::Matrix<double, 3, 3> kernel = Eigen::Matrix<double, 3, 3>::Zero();

|

||||

if(type == "Sobel_x")

|

||||

kernel << -1., 0., 1.,-2., 0., 2. , -1., 0., 1.;

|

||||

else if(type == "Sobel_y")

|

||||

kernel << -1., -2., -1., 0., 0., 0., 1., 2., 1.;

|

||||

|

||||

double delta = 0;

|

||||

int offs = (int)(kernel.rows()-1)/2;

|

||||

|

||||

for(int i = 0; i < kernel.rows(); i++)

|

||||

for(int j = 0; j < kernel.cols(); j++)

|

||||

delta += ((float)frame.at<uint8_t>(pose.y+j-offs , pose.x+i-offs))/255. * (float)kernel(j,i);

|

||||

|

||||

return delta;

|

||||

}
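To make the manual convolution in `Kernel` easier to check by hand, here is a minimal sketch of the horizontal Sobel response on a plain nested vector instead of a `cv::Mat`. `Image` and `sobelX` are hypothetical names; intensities are scaled to [0, 1] as in the code above, and the caller is assumed to stay one pixel away from the border.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

using Image = std::vector<std::vector<std::uint8_t>>;  // image[row][col]

// Horizontal Sobel response at (row, col) with intensities scaled to [0, 1].
double sobelX(const Image& img, int row, int col) {
  static const int kernel[3][3] = {{-1, 0, 1}, {-2, 0, 2}, {-1, 0, 1}};
  assert(row >= 1 && col >= 1);
  assert(row + 1 < static_cast<int>(img.size()));
  assert(col + 1 < static_cast<int>(img[row].size()));
  double delta = 0.0;
  for (int j = 0; j < 3; ++j) {    // kernel row -> image row offset
    for (int i = 0; i < 3; ++i) {  // kernel column -> image column offset
      delta += img[row + j - 1][col + i - 1] / 255.0 * kernel[j][i];
    }
  }
  return delta;
}
```

A vertical edge from black to white (columns 0, 0, 255) yields the maximum response of 4 at the center pixel, while a uniform patch yields 0.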

|

||||

bool Feature::estimate_FrameIrradiance(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0,

|

||||

CameraCalibration& cam,

|

||||

std::vector<double>& anchorPatch_estimate,

|

||||

IlluminationParameter& estimated_illumination) const

|

||||

{

|

||||

@ -391,11 +558,11 @@ bool Feature::estimate_FrameIrradiance(

|

||||

// multiply by a and add b of this frame

|

||||

|

||||

auto anchor = observations.begin();

|

||||

if(cam0.moving_window.find(anchor->first) == cam0.moving_window.end())

|

||||

if(cam.moving_window.find(anchor->first) == cam.moving_window.end())

|

||||

return false;

|

||||

|

||||

double anchorExposureTime_ms = cam0.moving_window.find(anchor->first)->second.exposureTime_ms;

|

||||

double frameExposureTime_ms = cam0.moving_window.find(cam_state_id)->second.exposureTime_ms;

|

||||

double anchorExposureTime_ms = cam.moving_window.find(anchor->first)->second.exposureTime_ms;

|

||||

double frameExposureTime_ms = cam.moving_window.find(cam_state_id)->second.exposureTime_ms;

|

||||

|

||||

|

||||

double a_A = anchorExposureTime_ms;

|

||||

@ -530,21 +697,55 @@ bool Feature::MarkerGeneration(

|

||||

marker_pub.publish(ma);

|

||||

}

|

||||

|

||||

|

||||

bool Feature::VisualizeKernel(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0) const

|

||||

{

|

||||

auto anchor = observations.begin();

|

||||

cv::Mat anchorImage = cam0.moving_window.find(anchor->first)->second.image;

|

||||

|

||||

//cv::Mat xderImage;

|

||||

//cv::Mat yderImage;

|

||||

|

||||

//cv::Sobel(anchorImage, xderImage, CV_8UC1, 1, 0, 3);

|

||||

//cv::Sobel(anchorImage, yderImage, CV_8UC1, 0, 1, 3);

|

||||

|

||||

|

||||

// cv::Mat xderImage2(anchorImage.rows, anchorImage.cols, anchorImage_blurred.type());

|

||||

// cv::Mat yderImage2(anchorImage.rows, anchorImage.cols, anchorImage_blurred.type());

|

||||

|

||||

/*

|

||||

for(int i = 1; i < anchorImage.rows-1; i++)

|

||||

for(int j = 1; j < anchorImage.cols-1; j++)

|

||||

xderImage2.at<uint8_t>(j,i) = 255.*fabs(Kernel(cv::Point2f(i,j), anchorImage_blurred, "Sobel_x"));

|

||||

|

||||

|

||||

for(int i = 1; i < anchorImage.rows-1; i++)

|

||||

for(int j = 1; j < anchorImage.cols-1; j++)

|

||||

yderImage2.at<uint8_t>(j,i) = 255.*fabs(Kernel(cv::Point2f(i,j), anchorImage_blurred, "Sobel_y"));

|

||||

*/

|

||||

//cv::imshow("anchor", anchorImage);

|

||||

cv::imshow("xder2", xderImage);

|

||||

cv::imshow("yder2", yderImage);

|

||||

|

||||

cvWaitKey(0);

|

||||

}

|

||||

|

||||

bool Feature::VisualizePatch(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

CameraCalibration& cam0,

|

||||

const std::vector<double> photo_r,

|

||||

std::stringstream& ss,

|

||||

cv::Point2f gradientVector,

|

||||

cv::Point2f residualVector) const

|

||||

CameraCalibration& cam,

|

||||

const Eigen::VectorXd& photo_r,

|

||||

std::stringstream& ss) const

|

||||

{

|

||||

|

||||

double rescale = 1;

|

||||

|

||||

//visu - anchor

|

||||

auto anchor = observations.begin();

|

||||

cv::Mat anchorImage = cam0.moving_window.find(anchor->first)->second.image;

|

||||

cv::Mat anchorImage = cam.moving_window.find(anchor->first)->second.image;

|

||||

cv::Mat dottedFrame(anchorImage.size(), CV_8UC3);

|

||||

cv::cvtColor(anchorImage, dottedFrame, CV_GRAY2RGB);

|

||||

|

||||

@ -556,10 +757,10 @@ bool Feature::VisualizePatch(

|

||||

cv::Point ys(point.x, point.y);

|

||||

cv::rectangle(dottedFrame, xs, ys, cv::Scalar(0,255,255));

|

||||

}

|

||||

cam0.featureVisu = dottedFrame.clone();

|

||||

cam.featureVisu = dottedFrame.clone();

|

||||

|

||||

// visu - feature

|

||||

cv::Mat current_image = cam0.moving_window.find(cam_state_id)->second.image;

|

||||

cv::Mat current_image = cam.moving_window.find(cam_state_id)->second.image;

|

||||

cv::cvtColor(current_image, dottedFrame, CV_GRAY2RGB);

|

||||

|

||||

// set position in frame

|

||||

@ -567,7 +768,7 @@ bool Feature::VisualizePatch(

|

||||

std::vector<double> projectionPatch;

|

||||

for(auto point : anchorPatch_3d)

|

||||

{

|

||||

cv::Point2f p_in_c0 = projectPositionToCamera(cam_state, cam_state_id, cam0, point);

|

||||

cv::Point2f p_in_c0 = projectPositionToCamera(cam_state, cam_state_id, cam, point);

|

||||

projectionPatch.push_back(PixelIrradiance(p_in_c0, current_image));

|

||||

// visu - feature

|

||||

cv::Point xs(p_in_c0.x, p_in_c0.y);

|

||||

@ -575,7 +776,7 @@ bool Feature::VisualizePatch(

|

||||

cv::rectangle(dottedFrame, xs, ys, cv::Scalar(0,255,0));

|

||||

}

|

||||

|

||||

cv::hconcat(cam0.featureVisu, dottedFrame, cam0.featureVisu);

|

||||

cv::hconcat(cam.featureVisu, dottedFrame, cam.featureVisu);

|

||||

|

||||

|

||||

// patches visualization

|

||||

@ -622,10 +823,14 @@ bool Feature::VisualizePatch(

|

||||

cv::putText(irradianceFrame, namer.str() , cvPoint(30, 65+scale*2*N),

|

||||

cv::FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(0,0,0), 1, CV_AA);

|

||||

|

||||

cv::Point2f p_f(observations.find(cam_state_id)->second(0),observations.find(cam_state_id)->second(1));

|

||||

cv::Point2f p_f;

|

||||

if(cam.id == 0)

|

||||

p_f = cv::Point2f(observations.find(cam_state_id)->second(0),observations.find(cam_state_id)->second(1));

|

||||

else if(cam.id == 1)

|

||||

p_f = cv::Point2f(observations.find(cam_state_id)->second(2),observations.find(cam_state_id)->second(3));

|

||||

// move to real pixels

|

||||

p_f = image_handler::distortPoint(p_f, cam0.intrinsics, cam0.distortion_model, cam0.distortion_coeffs);

|

||||

|

||||

p_f = image_handler::distortPoint(p_f, cam.intrinsics, cam.distortion_model, cam.distortion_coeffs);

|

||||

for(int i = 0; i<N; i++)

|

||||

{

|

||||

for(int j = 0; j<N ; j++)

|

||||

@ -642,22 +847,23 @@ bool Feature::VisualizePatch(

|

||||

// residual grid projection, positive - red, negative - blue colored

|

||||

namer.str(std::string());

|

||||

namer << "residual";

|

||||

std::cout << "-- photo_r -- \n" << photo_r << " -- " << std::endl;

|

||||

cv::putText(irradianceFrame, namer.str() , cvPoint(30+scale*N, scale*N/2-5),

|

||||

cv::FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(0,0,0), 1, CV_AA);

|

||||

|

||||

for(int i = 0; i<N; i++)

|

||||

for(int j = 0; j<N; j++)

|

||||

if(photo_r[i*N+j]>0)

|

||||

if(photo_r(2*(i*N+j))>0)

|

||||

cv::rectangle(irradianceFrame,

|

||||

cv::Point(40+scale*(N+i+1), 15+scale*(N/2+j)),

|

||||

cv::Point(40+scale*(N+i), 15+scale*(N/2+j+1)),

|

||||

cv::Scalar(255 - photo_r[i*N+j]*255, 255 - photo_r[i*N+j]*255, 255),

|

||||

cv::Scalar(255 - photo_r(2*(i*N+j)+1)*255, 255 - photo_r(2*(i*N+j)+1)*255, 255),

|

||||

CV_FILLED);

|

||||

else

|

||||

cv::rectangle(irradianceFrame,

|

||||

cv::Point(40+scale*(N+i+1), 15+scale*(N/2+j)),

|

||||

cv::Point(40+scale*(N+i), 15+scale*(N/2+j+1)),

|

||||

cv::Scalar(255, 255 + photo_r[i*N+j]*255, 255 + photo_r[i*N+j]*255),

|

||||

cv::Scalar(255, 255 + photo_r(2*(i*N+j)+1)*255, 255 + photo_r(2*(i*N+j)+1)*255),

|

||||

CV_FILLED);

|

||||

|

||||

// gradient arrow

|

||||

@ -670,14 +876,15 @@ bool Feature::VisualizePatch(

|

||||

*/

|

||||

|

||||

// residual gradient direction

|

||||

/*

|

||||

cv::arrowedLine(irradianceFrame,

|

||||

cv::Point(40+scale*(N+N/2+0.5), 15+scale*((N-0.5))),

|

||||

cv::Point(40+scale*(N+N/2+0.5)+scale*residualVector.x, 15+scale*(N-0.5)+scale*residualVector.y),

|

||||

cv::Scalar(0, 255, 175),

|

||||

3);

|

||||

|

||||

*/

|

||||

|

||||

cv::hconcat(cam0.featureVisu, irradianceFrame, cam0.featureVisu);

|

||||

cv::hconcat(cam.featureVisu, irradianceFrame, cam.featureVisu);

|

||||

|

||||

/*

|

||||

// visualize position of used observations and resulting feature position

|

||||

@ -709,15 +916,15 @@ bool Feature::VisualizePatch(

|

||||

|

||||

// draw, x y position and arrow with direction - write z next to it

|

||||

|

||||

cv::resize(cam0.featureVisu, cam0.featureVisu, cv::Size(), rescale, rescale);

|

||||

cv::resize(cam.featureVisu, cam.featureVisu, cv::Size(), rescale, rescale);

|

||||

|

||||

|

||||

cv::hconcat(cam0.featureVisu, positionFrame, cam0.featureVisu);

|

||||

cv::hconcat(cam.featureVisu, positionFrame, cam.featureVisu);

|

||||

*/

|

||||

// write feature position

|

||||

std::stringstream pos_s;

|

||||

pos_s << "u: " << observations.begin()->second(0) << " v: " << observations.begin()->second(1);

|

||||

cv::putText(cam0.featureVisu, ss.str() , cvPoint(anchorImage.rows + 100, anchorImage.cols - 30),

|

||||

cv::putText(cam.featureVisu, ss.str() , cvPoint(anchorImage.rows + 100, anchorImage.cols - 30),

|

||||

cv::FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(200,200,250), 1, CV_AA);

|

||||

// create line?

|

||||

|

||||

@ -725,16 +932,51 @@ bool Feature::VisualizePatch(

|

||||

|

||||

std::stringstream loc;

|

||||

// loc << "/home/raphael/dev/MSCKF_ws/img/feature_" << std::to_string(ros::Time::now().toSec()) << ".jpg";

|

||||

//cv::imwrite(loc.str(), cam0.featureVisu);

|

||||

//cv::imwrite(loc.str(), cam.featureVisu);

|

||||

|

||||

cv::imshow("patch", cam0.featureVisu);

|

||||

cvWaitKey(0);

|

||||

cv::imshow("patch", cam.featureVisu);

|

||||

cvWaitKey(1);

|

||||

}

|

||||

|

||||

float Feature::PixelIrradiance(cv::Point2f pose, cv::Mat image) const

|

||||

{

|

||||

|

||||

return ((float)image.at<uint8_t>(pose.y, pose.x))/255;

|

||||

return ((float)image.at<uint8_t>(pose.y, pose.x))/255.;

|

||||

}

|

||||

|

||||

cv::Point2f Feature::pixelDistanceAt(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

const CameraCalibration& cam,

|

||||

Eigen::Vector3d& in_p) const

|

||||

{

|

||||

|

||||

cv::Point2f cam_p = projectPositionToCamera(cam_state, cam_state_id, cam, in_p);

|

||||

|

||||

// create vector of patch in pixel plane

|

||||

std::vector<cv::Point2f> surroundingPoints;

|

||||

surroundingPoints.push_back(cv::Point2f(cam_p.x+1, cam_p.y));

|

||||

surroundingPoints.push_back(cv::Point2f(cam_p.x-1, cam_p.y));

|

||||

surroundingPoints.push_back(cv::Point2f(cam_p.x, cam_p.y+1));

|

||||

surroundingPoints.push_back(cv::Point2f(cam_p.x, cam_p.y-1));

|

||||

|

||||

std::vector<cv::Point2f> pure;

|

||||

image_handler::undistortPoints(surroundingPoints,

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs,

|

||||

pure);

|

||||

|

||||

// transform position to camera frame

|

||||

// to get distance multiplier

|

||||

Eigen::Matrix3d R_w_c0 = quaternionToRotation(cam_state.orientation);

|

||||

const Eigen::Vector3d& t_c0_w = cam_state.position;

|

||||

Eigen::Vector3d p_c0 = R_w_c0 * (in_p-t_c0_w);

|

||||

|

||||

// returns the distance between the pixel points in space

|

||||

cv::Point2f distance(fabs(pure[0].x - pure[1].x), fabs(pure[2].y - pure[3].y));

|

||||

|

||||

return distance;

|

||||

}

|

||||

|

||||

cv::Point2f Feature::projectPositionToCamera(

|

||||

@ -746,26 +988,40 @@ cv::Point2f Feature::projectPositionToCamera(

|

||||

Eigen::Isometry3d T_c0_w;

|

||||

|

||||

cv::Point2f out_p;

|

||||

|

||||

cv::Point2f my_p;

|

||||

// transform position to camera frame

|

||||

|

||||

// cam0 position

|

||||

Eigen::Matrix3d R_w_c0 = quaternionToRotation(cam_state.orientation);

|

||||

const Eigen::Vector3d& t_c0_w = cam_state.position;

|

||||

Eigen::Vector3d p_c0 = R_w_c0 * (in_p-t_c0_w);

|

||||

|

||||

out_p = cv::Point2f(p_c0(0)/p_c0(2), p_c0(1)/p_c0(2));

|

||||

// project point according to model

|

||||

if(cam.id == 0)

|

||||

{

|

||||

Eigen::Vector3d p_c0 = R_w_c0 * (in_p-t_c0_w);

|

||||

out_p = cv::Point2f(p_c0(0)/p_c0(2), p_c0(1)/p_c0(2));

|

||||

|

||||

// if(cam_state_id == observations.begin()->first)

|

||||

//printf("undist:\n \tproj pos: %f, %f\n\ttrue pos: %f, %f\n", out_p.x, out_p.y, undist_anchor_center_pos.x, undist_anchor_center_pos.y);

|

||||

|

||||

cv::Point2f my_p = image_handler::distortPoint(out_p,

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs);

|

||||

}

|

||||

// if camera id is 1, calculate the cam1 position from the cam0 position first

|

||||

else if(cam.id == 1)

|

||||

{

|

||||

// cam1 position

|

||||

Eigen::Matrix3d R_c0_c1 = CAMState::T_cam0_cam1.linear();

|

||||

Eigen::Matrix3d R_w_c1 = R_c0_c1 * R_w_c0;

|

||||

Eigen::Vector3d t_c1_w = t_c0_w - R_w_c1.transpose()*CAMState::T_cam0_cam1.translation();

|

||||

|

||||

// printf("truPosition: %f, %f, %f\n", position.x(), position.y(), position.z());

|

||||

// printf("camPosition: %f, %f, %f\n", p_c0(0), p_c0(1), p_c0(2));

|

||||

// printf("Photo projection: %f, %f\n", my_p[0].x, my_p[0].y);

|

||||

|

||||

Eigen::Vector3d p_c1 = R_w_c1 * (in_p-t_c1_w);

|

||||

out_p = cv::Point2f(p_c1(0)/p_c1(2), p_c1(1)/p_c1(2));

|

||||

}

|

||||

|

||||

// distort point according to camera model (apply intrinsics only if the image was pre-undistorted)

|

||||

if (cam.distortion_model.substr(0,3) == "pre")

|

||||

my_p = cv::Point2f(out_p.x * cam.intrinsics[0] + cam.intrinsics[2], out_p.y * cam.intrinsics[1] + cam.intrinsics[3]);

|

||||

else

|

||||

my_p = image_handler::distortPoint(out_p,

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs);

|

||||

return my_p;

|

||||

}
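The projection in `projectPositionToCamera` can be summarised as: rotate and translate the world point into the camera frame, divide by depth, then apply the intrinsics. A minimal sketch without Eigen or OpenCV follows. It assumes the intrinsics are ordered `[fx, fy, cx, cy]`, as the indexing in the diff suggests, and it omits lens distortion; `Vec3`, `Mat3`, `Pixel` and `projectToPixel` are illustrative names only.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

struct Pixel {
    double u;
    double v;
};

// Computes p_c = R_w_c * (p_w - t_c_w), normalises by depth and applies
// pinhole intrinsics ordered as [fx, fy, cx, cy] (assumed ordering).
Pixel projectToPixel(const Mat3& R_w_c, const Vec3& t_c_w, const Vec3& p_w,
                     const std::array<double, 4>& intrinsics) {
    const Vec3 d{p_w[0] - t_c_w[0], p_w[1] - t_c_w[1], p_w[2] - t_c_w[2]};
    Vec3 p_c{0.0, 0.0, 0.0};
    for (std::size_t i = 0; i < 3; ++i)
        for (std::size_t j = 0; j < 3; ++j)
            p_c[i] += R_w_c[i][j] * d[j];

    // The point must be in front of the camera for the division to be meaningful.
    assert(p_c[2] > 0.0);

    return Pixel{intrinsics[0] * (p_c[0] / p_c[2]) + intrinsics[2],
                 intrinsics[1] * (p_c[1] / p_c[2]) + intrinsics[3]};
}
```

With an identity rotation, zero translation, the point (1, 2, 4) and intrinsics (100, 200, 320, 240), this yields the pixel (345, 340).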

|

||||

|

||||

@ -790,61 +1046,240 @@ bool Feature::initializeAnchor(const CameraCalibration& cam, int N)

|

||||

|

||||

auto anchor = observations.begin();

|

||||

if(cam.moving_window.find(anchor->first) == cam.moving_window.end())

|

||||

{

|

||||

return false;

|

||||

|

||||

}

|

||||

cv::Mat anchorImage = cam.moving_window.find(anchor->first)->second.image;

|

||||

cv::Mat anchorImage_deeper;

|

||||

anchorImage.convertTo(anchorImage_deeper,CV_16S);

|

||||

//TODO remove this?

|

||||

|

||||

|

||||

cv::Sobel(anchorImage_deeper, xderImage, -1, 1, 0, 3);

|

||||

cv::Sobel(anchorImage_deeper, yderImage, -1, 0, 1, 3);

|

||||

|

||||

xderImage/=8.;

|

||||

yderImage/=8.;

|

||||

|

||||

cv::convertScaleAbs(xderImage, abs_xderImage);

|

||||

cv::convertScaleAbs(yderImage, abs_yderImage);

|

||||

|

||||

cv::GaussianBlur(anchorImage, anchorImage_blurred, cv::Size(3,3), 0, 0, cv::BORDER_DEFAULT);

|

||||

|

||||

auto u = anchor->second(0);//*cam.intrinsics[0] + cam.intrinsics[2];

|

||||

auto v = anchor->second(1);//*cam.intrinsics[1] + cam.intrinsics[3];

|

||||

|

||||

//testing

|

||||

undist_anchor_center_pos = cv::Point2f(u,v);

|

||||

|

||||

//for NxN patch pixels around feature

|

||||

int count = 0;

|

||||

|

||||

// get feature in undistorted pixel space

|

||||

// this only reverts from 'pure' space into undistorted pixel space using camera matrix

|

||||

cv::Point2f und_pix_p = image_handler::distortPoint(cv::Point2f(u, v),

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs);

|

||||

// create vector of patch in pixel plane

|

||||

for(double u_run = -n; u_run <= n; u_run++)

|

||||

for(double v_run = -n; v_run <= n; v_run++)

|

||||

anchorPatch_real.push_back(cv::Point2f(und_pix_p.x+u_run, und_pix_p.y+v_run));

|

||||

// check if image has been pre-undistorted

|

||||

if(cam.distortion_model.substr(0,3) == "pre")

|

||||

{

|

||||

//project onto pixel plane

|

||||

undist_anchor_center_pos = cv::Point2f(u * cam.intrinsics[0] + cam.intrinsics[2], v * cam.intrinsics[1] + cam.intrinsics[3]);

|

||||

// create vector of patch in pixel plane

|

||||

for(double u_run = -n; u_run <= n; u_run++)

|

||||

for(double v_run = -n; v_run <= n; v_run++)

|

||||

anchorPatch_real.push_back(cv::Point2f(undist_anchor_center_pos.x+u_run, undist_anchor_center_pos.y+v_run));

|

||||

|

||||

|

||||

//create undistorted pure points

|

||||

image_handler::undistortPoints(anchorPatch_real,

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs,

|

||||

anchorPatch_ideal);

|

||||

//project back into u,v

|

||||

for(int i = 0; i < N*N; i++)

|

||||

anchorPatch_ideal.push_back(cv::Point2f((anchorPatch_real[i].x-cam.intrinsics[2])/cam.intrinsics[0], (anchorPatch_real[i].y-cam.intrinsics[3])/cam.intrinsics[1]));

|

||||

}

|

||||

|

||||

else

|

||||

{

|

||||

// get feature in undistorted pixel space

|

||||

// this only reverts from 'pure' space into undistorted pixel space using camera matrix

|

||||

cv::Point2f und_pix_p = image_handler::distortPoint(cv::Point2f(u, v),

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs);

|

||||

// create vector of patch in pixel plane

|

||||

for(double u_run = -n; u_run <= n; u_run++)

|

||||

for(double v_run = -n; v_run <= n; v_run++)

|

||||

anchorPatch_real.push_back(cv::Point2f(und_pix_p.x+u_run, und_pix_p.y+v_run));

|

||||

|

||||

|

||||

//create undistorted pure points

|

||||

image_handler::undistortPoints(anchorPatch_real,

|

||||

cam.intrinsics,

|

||||

cam.distortion_model,

|

||||

cam.distortion_coeffs,

|

||||

anchorPatch_ideal);

|

||||

|

||||

}

|

||||

|

||||

// save anchor position for later visualization

|

||||

anchor_center_pos = anchorPatch_real[(N*N-1)/2];

|

||||

|

||||

|

||||

// save true pixel Patch position

|

||||

for(auto point : anchorPatch_real)

|

||||

if(point.x - n < 0 || point.x + n >= cam.resolution(0) || point.y - n < 0 || point.y + n >= cam.resolution(1))

|

||||

if(point.x - n < 0 || point.x + n >= cam.resolution(0)-1 || point.y - n < 0 || point.y + n >= cam.resolution(1)-1)

|

||||

return false;

|

||||

|

||||

|

||||

for(auto point : anchorPatch_real)

|

||||

anchorPatch.push_back(PixelIrradiance(point, anchorImage));

|

||||

|

||||

|

||||

// project patch pixel to 3D space in camera coordinate system

|

||||

for(auto point : anchorPatch_ideal)

|

||||

anchorPatch_3d.push_back(AnchorPixelToPosition(point, cam));

|

||||

|

||||

is_anchored = true;

|

||||

|

||||

return true;

|

||||

}
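The patch construction in `initializeAnchor` enumerates an N x N grid of pixel offsets around the feature and rejects the feature when the patch leaves the image. A simplified sketch of that idea follows. It assumes `n = (N - 1) / 2` (the definition of `n` is not visible in this hunk) and uses a plain inside-the-image test rather than the extra margin applied in the diff; `Point2`, `makePatch` and `patchInsideImage` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Point2 {
    float x;
    float y;
};

// Builds the N x N pixel patch centred on `center` (N must be odd).
// The column offset runs in the outer loop and the row offset in the
// inner loop, matching the loop order shown in the diff.
std::vector<Point2> makePatch(Point2 center, int N) {
    assert(N > 0 && N % 2 == 1);
    const int n = (N - 1) / 2;  // assumed half-width of the patch
    std::vector<Point2> patch;
    patch.reserve(static_cast<std::size_t>(N) * static_cast<std::size_t>(N));
    for (int du = -n; du <= n; ++du)
        for (int dv = -n; dv <= n; ++dv)
            patch.push_back(Point2{center.x + static_cast<float>(du),
                                   center.y + static_cast<float>(dv)});
    return patch;
}

// Returns true when every patch point lies inside a width x height image.
bool patchInsideImage(const std::vector<Point2>& patch, int width, int height) {
    for (const Point2& p : patch) {
        if (p.x < 0.0f || p.y < 0.0f ||
            p.x > static_cast<float>(width - 1) ||
            p.y > static_cast<float>(height - 1)) {
            return false;
        }
    }
    return true;
}
```

With this loop order the centre pixel sits at index `(N*N-1)/2`, which is the index the diff uses for `anchor_center_pos`.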

|

||||

|

||||

|

||||

bool Feature::initializeRho(const CamStateServer& cam_states) {

|

||||

|

||||

// Organize camera poses and feature observations properly.

|

||||

std::vector<Eigen::Isometry3d,

|

||||

Eigen::aligned_allocator<Eigen::Isometry3d> > cam_poses(0);

|

||||

std::vector<Eigen::Vector2d,

|

||||

Eigen::aligned_allocator<Eigen::Vector2d> > measurements(0);

|

||||

|

||||

for (auto& m : observations) {

|

||||

auto cam_state_iter = cam_states.find(m.first);

|

||||

if (cam_state_iter == cam_states.end()) continue;

|

||||

|

||||

// Add the measurement.

|

||||

measurements.push_back(m.second.head<2>());

|

||||

measurements.push_back(m.second.tail<2>());

|

||||

|

||||

// This camera pose will take a vector from this camera frame

|

||||

// to the world frame.

|

||||

Eigen::Isometry3d cam0_pose;

|

||||

cam0_pose.linear() = quaternionToRotation(

|

||||

cam_state_iter->second.orientation).transpose();

|

||||

cam0_pose.translation() = cam_state_iter->second.position;

|

||||

|

||||

Eigen::Isometry3d cam1_pose;

|

||||

cam1_pose = cam0_pose * CAMState::T_cam0_cam1.inverse();

|

||||

|

||||

cam_poses.push_back(cam0_pose);

|

||||

cam_poses.push_back(cam1_pose);

|

||||

}

|

||||

|

||||

// All camera poses should be modified such that each takes a

|

||||

// vector from the first camera frame in the buffer to this

|

||||

// camera frame.

|

||||

Eigen::Isometry3d T_c0_w = cam_poses[0];

|

||||

T_anchor_w = T_c0_w;

|

||||

for (auto& pose : cam_poses)

|

||||

pose = pose.inverse() * T_c0_w;

|

||||

|

||||

// Generate initial guess

|

||||

double initial_depth = 0;

|

||||

initial_depth = generateInitialDepth(cam_poses[cam_poses.size()-1], measurements[0],

|

||||

measurements[measurements.size()-1]);

|

||||

|

||||

double solution = 1.0/initial_depth;

|

||||

|

||||

// Apply Levenberg-Marquardt method to solve for the 3d position.

|

||||

double lambda = optimization_config.initial_damping;

|

||||

int inner_loop_cntr = 0;

|

||||

int outer_loop_cntr = 0;

|

||||

bool is_cost_reduced = false;

|

||||

double delta_norm = 0;

|

||||

|

||||

// Compute the initial cost.

|

||||

double total_cost = 0.0;

|

||||

for (int i = 0; i < cam_poses.size(); ++i) {

|

||||

double this_cost = 0.0;

|

||||

Rhocost(cam_poses[i], solution, measurements[0], measurements[i], this_cost);

|

||||

total_cost += this_cost;

|

||||

}

|

||||

|

||||

// Outer loop.

|

||||

do {

|

||||

Eigen::Matrix<double, 1, 1> A = Eigen::Matrix<double, 1, 1>::Zero();

|

||||

Eigen::Matrix<double, 1, 1> b = Eigen::Matrix<double, 1, 1>::Zero();

|

||||

|

||||

for (int i = 0; i < cam_poses.size(); ++i) {

|

||||

Eigen::Matrix<double, 2, 1> J;

|

||||

Eigen::Vector2d r;

|

||||

double w;

|

||||

|

||||

RhoJacobian(cam_poses[i], solution, measurements[0], measurements[i], J, r, w);

|

||||

|

||||

if (w == 1) {

|

||||

A += J.transpose() * J;

|

||||

b += J.transpose() * r;

|

||||

} else {

|

||||

double w_square = w * w;

|

||||

A += w_square * J.transpose() * J;

|

||||

b += w_square * J.transpose() * r;

|

||||

}

|

||||

}

|

||||

|

||||

// Inner loop.

|

||||

// Solve for the delta that can reduce the total cost.

|

||||

do {

|

||||

Eigen::Matrix<double, 1, 1> damper = lambda*Eigen::Matrix<double, 1, 1>::Identity();

|

||||

Eigen::Matrix<double, 1, 1> delta = (A+damper).ldlt().solve(b);

|

||||

double new_solution = solution - delta(0,0);

|

||||

delta_norm = delta.norm();

|

||||

|

||||

double new_cost = 0.0;

|

||||

for (int i = 0; i < cam_poses.size(); ++i) {

|

||||

double this_cost = 0.0;

|

||||

Rhocost(cam_poses[i], new_solution, measurements[0], measurements[i], this_cost);

|

||||

new_cost += this_cost;

|

||||

}

|

||||

|

||||

if (new_cost < total_cost) {

|

||||

is_cost_reduced = true;

|

||||

solution = new_solution;

|

||||

total_cost = new_cost;

|

||||

lambda = lambda/10 > 1e-10 ? lambda/10 : 1e-10;

|

||||

} else {

|

||||

is_cost_reduced = false;

|

||||

lambda = lambda*10 < 1e12 ? lambda*10 : 1e12;

|

||||

}

|

||||

|

||||

} while (inner_loop_cntr++ <

|

||||

optimization_config.inner_loop_max_iteration && !is_cost_reduced);

|

||||

|

||||

inner_loop_cntr = 0;

|

||||

|

||||

} while (outer_loop_cntr++ <

|

||||

optimization_config.outer_loop_max_iteration &&

|

||||

delta_norm > optimization_config.estimation_precision);

|

||||

|

||||

// Convert the feature position from inverse depth

|

||||

// representation to its 3d coordinate.

|

||||

Eigen::Vector3d final_position(measurements[0](0)/solution,

|

||||

measurements[0](1)/solution, 1.0/solution);

|

||||

|

||||

// Check if the solution is valid. Make sure the feature

|

||||

// is in front of every camera frame observing it.

|

||||

bool is_valid_solution = true;

|

||||

for (const auto& pose : cam_poses) {

|

||||

Eigen::Vector3d position =

|

||||

pose.linear()*final_position + pose.translation();

|

||||

if (position(2) <= 0) {

|

||||

is_valid_solution = false;

|

||||

break;

|

||||

}

|

||||

}

|

||||

|

||||

//save inverse depth distance from camera

|

||||

anchor_rho = solution;

|

||||

|

||||

// Convert the feature position to the world frame.

|

||||

position = T_c0_w.linear()*final_position + T_c0_w.translation();

|

||||

|

||||

if (is_valid_solution)

|

||||

is_initialized = true;

|

||||

|

||||

return is_valid_solution;

|

||||

}
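The damping schedule in `initializeRho` (divide lambda by 10 after a successful step, multiply by 10 after a rejected one, clamp to [1e-10, 1e12]) can be tried in isolation on a scalar problem. The sketch below uses a toy residual `r_i = x * t_i - y_i` in place of the reprojection residual computed by `Rhocost` and `RhoJacobian`. The function names and the fixed iteration limits and precision are assumptions for illustration, not values read from `optimization_config`.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Sum of squared residuals r_i = x * t_i - y_i (toy model only).
double toyCost(double x, const std::vector<double>& t, const std::vector<double>& y) {
    double cost = 0.0;
    for (std::size_t i = 0; i < t.size(); ++i) {
        const double r = x * t[i] - y[i];
        cost += r * r;
    }
    return cost;
}

// Scalar Levenberg-Marquardt with the same lambda schedule as the diff.
double solveScalarLM(double x, const std::vector<double>& t, const std::vector<double>& y) {
    const int max_outer = 10;        // assumed limit
    const int max_inner = 10;        // assumed limit
    const double precision = 1e-7;   // assumed stopping threshold
    double lambda = 1e-3;            // assumed initial damping
    double cost = toyCost(x, t, y);
    double delta_norm = 0.0;
    int outer = 0;
    do {
        // Normal equations for one unknown: A = sum J^2, b = sum J * r, with J = t_i.
        double A = 0.0;
        double b = 0.0;
        for (std::size_t i = 0; i < t.size(); ++i) {
            const double r = x * t[i] - y[i];
            A += t[i] * t[i];
            b += t[i] * r;
        }
        bool reduced = false;
        int inner = 0;
        do {
            const double delta = b / (A + lambda);
            const double candidate = x - delta;
            delta_norm = std::fabs(delta);
            const double new_cost = toyCost(candidate, t, y);
            if (new_cost < cost) {
                reduced = true;
                x = candidate;
                cost = new_cost;
                lambda = std::max(lambda / 10.0, 1e-10);
            } else {
                lambda = std::min(lambda * 10.0, 1e12);
            }
        } while (++inner < max_inner && !reduced);
    } while (++outer < max_outer && delta_norm > precision);
    return x;
}
```

For `t = {1, 2, 3}` and `y = {2, 4, 6}` the minimiser is `x = 2`, and the loop reaches it within a few outer iterations when started from `x = 0`.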

|

||||

|

||||

|

||||

bool Feature::initializePosition(const CamStateServer& cam_states) {

|

||||

|

||||

// Organize camera poses and feature observations properly.

|

||||

|

||||

std::vector<Eigen::Isometry3d,

|

||||

Eigen::aligned_allocator<Eigen::Isometry3d> > cam_poses(0);

|

||||

std::vector<Eigen::Vector2d,

|

||||

@ -982,6 +1417,7 @@ bool Feature::initializePosition(const CamStateServer& cam_states) {

|

||||

|

||||

//save inverse depth distance from camera

|

||||

anchor_rho = solution(2);

|

||||

std::cout << "from feature: " << anchor_rho << std::endl;

|

||||

|

||||

// Convert the feature position to the world frame.

|

||||

position = T_c0_w.linear()*final_position + T_c0_w.translation();

|

||||

|

||||

@ -16,6 +16,16 @@ namespace msckf_vio {

|

||||

*/

|

||||

namespace image_handler {

|

||||

|

||||

cv::Point2f pinholeDownProject(const cv::Point2f& p_in, const cv::Vec4d& intrinsics);

|

||||

cv::Point2f pinholeUpProject(const cv::Point2f& p_in, const cv::Vec4d& intrinsics);

|

||||

|

||||

void undistortImage(

|

||||

cv::InputArray src,

|

||||

cv::OutputArray dst,

|

||||

const std::string& distortion_model,

|

||||

const cv::Vec4d& intrinsics,

|

||||

const cv::Vec4d& distortion_coeffs);

|

||||

|

||||

void undistortPoints(

|

||||

const std::vector<cv::Point2f>& pts_in,

|

||||

const cv::Vec4d& intrinsics,

|

||||

|

||||

@ -320,6 +320,8 @@ private:

|

||||

return;

|

||||

}

|

||||

|

||||

bool STREAMPAUSE;

|

||||

|

||||

// Indicate if this is the first image message.

|

||||

bool is_first_img;

|

||||

|

||||

|

||||

@ -202,53 +202,111 @@ class MsckfVio {

|

||||

Eigen::Vector4d& r);

|

||||

// This function computes the Jacobian of all measurements viewed

|

||||

// in the given camera states of this feature.

|

||||

void featureJacobian(const FeatureIDType& feature_id,

|

||||

bool featureJacobian(

|

||||

const FeatureIDType& feature_id,

|

||||

const std::vector<StateIDType>& cam_state_ids,

|

||||

Eigen::MatrixXd& H_x, Eigen::VectorXd& r);

|

||||

|

||||

|

||||

void PhotometricMeasurementJacobian(

|

||||

const StateIDType& cam_state_id,

|

||||

const FeatureIDType& feature_id,

|

||||

Eigen::MatrixXd& H_x,

|

||||

Eigen::MatrixXd& H_y,

|

||||

Eigen::VectorXd& r);

|

||||

void twodotMeasurementJacobian(

|

||||

const StateIDType& cam_state_id,

|

||||

const FeatureIDType& feature_id,

|

||||

Eigen::MatrixXd& H_x, Eigen::MatrixXd& H_y, Eigen::VectorXd& r);

|

||||

|

||||

void PhotometricFeatureJacobian(

|

||||

const FeatureIDType& feature_id,

|

||||

const std::vector<StateIDType>& cam_state_ids,

|

||||

Eigen::MatrixXd& H_x, Eigen::VectorXd& r);

|

||||

bool ConstructJacobians(

|

||||

Eigen::MatrixXd& H_rho,

|

||||

Eigen::MatrixXd& H_pl,

|

||||

Eigen::MatrixXd& H_pA,

|

||||

const Feature& feature,

|

||||

const StateIDType& cam_state_id,

|

||||

Eigen::MatrixXd& H_xl,

|

||||

Eigen::MatrixXd& H_yl);

|

||||

|

||||

bool PhotometricPatchPointResidual(

|

||||

const StateIDType& cam_state_id,

|

||||

const Feature& feature,

|

||||

Eigen::VectorXd& r);

|

||||

|

||||

bool PhotometricPatchPointJacobian(

|

||||

const CAMState& cam_state,

|

||||

const StateIDType& cam_state_id,

|

||||

const Feature& feature,

|

||||

Eigen::Vector3d point,

|

||||

int count,

|

||||

Eigen::Matrix<double, 2, 1>& H_rhoj,

|

||||

Eigen::Matrix<double, 2, 6>& H_plj,

|

||||

Eigen::Matrix<double, 2, 6>& H_pAj,

|

||||

Eigen::Matrix<double, 2, 4>& dI_dhj);

|

||||

|

||||

bool PhotometricMeasurementJacobian(

|

||||

const StateIDType& cam_state_id,

|

||||

const FeatureIDType& feature_id,

|

||||

Eigen::MatrixXd& H_x,

|

||||

Eigen::MatrixXd& H_y,

|

||||

Eigen::VectorXd& r);

|

||||

|

||||

|

||||

bool twodotFeatureJacobian(

|

||||

const FeatureIDType& feature_id,

|

||||

const std::vector<StateIDType>& cam_state_ids,

|

||||

Eigen::MatrixXd& H_x, Eigen::VectorXd& r);

|

||||

|

||||

bool PhotometricFeatureJacobian(

|

||||

const FeatureIDType& feature_id,

|

||||

const std::vector<StateIDType>& cam_state_ids,

|

||||

Eigen::MatrixXd& H_x, Eigen::VectorXd& r);

|

||||

|

||||

void photometricMeasurementUpdate(const Eigen::MatrixXd& H, const Eigen::VectorXd& r);

|

||||

void measurementUpdate(const Eigen::MatrixXd& H,

|

||||

const Eigen::VectorXd& r);

|

||||

void twoMeasurementUpdate(const Eigen::MatrixXd& H, const Eigen::VectorXd& r);

|

||||

|

||||

bool gatingTest(const Eigen::MatrixXd& H,

|

||||

const Eigen::VectorXd&r, const int& dof);

|

||||

const Eigen::VectorXd&r, const int& dof, int filter=0);

|

||||

void removeLostFeatures();

|

||||

void findRedundantCamStates(

|

||||

std::vector<StateIDType>& rm_cam_state_ids);

|

||||

|

||||

void pruneLastCamStateBuffer();

|

||||

void pruneCamStateBuffer();

|

||||

// Reset the system online if the uncertainty is too large.

|

||||

void onlineReset();

|

||||

|

||||

// Photometry flag

|

||||

bool PHOTOMETRIC;

|

||||

int FILTER;

|

||||

|

||||

// debug flag

|

||||

bool STREAMPAUSE;

|

||||

bool PRINTIMAGES;

|

||||

bool GROUNDTRUTH;

|

||||

|

||||

bool nan_flag;

|

||||

bool play;

|

||||

double last_time_bound;

|

||||

double time_offset;

|

||||

|

||||

// Patch size for Photometry

|

||||

int N;

|

||||

|

||||

// Image rescale

|

||||

int SCALE;

|

||||

// Chi squared test table.

|

||||

static std::map<int, double> chi_squared_test_table;

|

||||

|

||||

double eval_time;

|

||||

|

||||

IMUState timed_old_imu_state;

|

||||

IMUState timed_old_true_state;

|

||||

|

||||

IMUState old_imu_state;

|

||||

IMUState old_true_state;

|

||||

|

||||

// change in position

|

||||

Eigen::Vector3d delta_position;

|

||||

Eigen::Vector3d delta_orientation;

|

||||

|

||||

// State vector

|

||||

StateServer state_server;

|

||||

StateServer photometric_state_server;

|

||||

|

||||

// Ground truth state vector

|

||||

StateServer true_state_server;

|

||||

@ -311,6 +369,7 @@ class MsckfVio {

|

||||

// Subscribers and publishers

|

||||

ros::Subscriber imu_sub;

|

||||

ros::Subscriber truth_sub;

|

||||

ros::Publisher truth_odom_pub;

|

||||

ros::Publisher odom_pub;

|

||||

ros::Publisher marker_pub;

|

||||

ros::Publisher feature_pub;

|

||||

|

||||

38

launch/image_processor_tinytum.launch

Normal file


@ -0,0 +1,38 @@

|

||||

<launch>

|

||||

|

||||

<arg name="robot" default="firefly_sbx"/>

|

||||

<arg name="calibration_file"

|

||||

default="$(find msckf_vio)/config/camchain-imucam-tum-scaled.yaml"/>

|

||||

|

||||

<!-- Image Processor Nodelet -->

|

||||

<group ns="$(arg robot)">

|

||||

<node pkg="nodelet" type="nodelet" name="image_processor"

|

||||

args="standalone msckf_vio/ImageProcessorNodelet"

|

||||

output="screen"

|

||||

>

|

||||

|

||||

<!-- Debugging Flags -->

|

||||

<param name="StreamPause" value="true"/>

|

||||

|

||||

<rosparam command="load" file="$(arg calibration_file)"/>

|

||||

<param name="grid_row" value="4"/>

|

||||

<param name="grid_col" value="4"/>

|

||||

<param name="grid_min_feature_num" value="3"/>

|

||||

<param name="grid_max_feature_num" value="5"/>

|

||||

<param name="pyramid_levels" value="3"/>

|

||||

<param name="patch_size" value="15"/>

|

||||

<param name="fast_threshold" value="10"/>

|

||||

<param name="max_iteration" value="30"/>

|

||||

<param name="track_precision" value="0.01"/>

|

||||

<param name="ransac_threshold" value="3"/>

|

||||

<param name="stereo_threshold" value="5"/>

|

||||

|

||||

<remap from="~imu" to="/imu0"/>

|

||||

<remap from="~cam0_image" to="/cam0/image_raw"/>

|

||||

<remap from="~cam1_image" to="/cam1/image_raw"/>

|

||||

|

||||

|

||||

</node>

|

||||

</group>

|

||||

|

||||

</launch>

|

||||

@ -11,6 +11,9 @@

|

||||

output="screen"

|

||||

>

|

||||

|

||||

<!-- Debugging Flags -->

|

||||

<param name="StreamPause" value="true"/>

|

||||

|

||||

<rosparam command="load" file="$(arg calibration_file)"/>

|

||||

<param name="grid_row" value="4"/>

|

||||

<param name="grid_col" value="4"/>

|

||||

|

||||

@ -22,10 +22,10 @@

|

||||

<param name="PHOTOMETRIC" value="true"/>

|

||||

|

||||

<!-- Debugging Flags -->

|

||||

<param name="PrintImages" value="false"/>

|

||||

<param name="PrintImages" value="true"/>

|

||||

<param name="GroundTruth" value="false"/>

|

||||

|

||||

<param name="patch_size_n" value="7"/>

|

||||

<param name="patch_size_n" value="3"/>

|

||||

|

||||

<!-- Calibration parameters -->

|

||||

<rosparam command="load" file="$(arg calibration_file)"/>

|

||||

|

||||

@ -17,6 +17,18 @@

|

||||

args='standalone msckf_vio/MsckfVioNodelet'

|

||||

output="screen">

|

||||

|

||||

|

||||

<!-- Filter Flag, 0 = msckf, 1 = photometric, 2 = two -->

|

||||

<param name="FILTER" value="1"/>

|

||||

|

||||

<!-- Debugging Flags -->

|

||||

<param name="StreamPause" value="true"/>

|

||||

<param name="PrintImages" value="true"/>

|

||||

<param name="GroundTruth" value="false"/>

|

||||

|

||||

<param name="patch_size_n" value="5"/>

|

||||

<param name="image_scale" value ="1"/>

|

||||

|

||||

<!-- Calibration parameters -->

|

||||

<rosparam command="load" file="$(arg calibration_file)"/>

|

||||

|

||||

|

||||

77

launch/msckf_vio_tinytum.launch

Normal file


@ -0,0 +1,77 @@

|

||||

<launch>

|

||||

|

||||

<arg name="robot" default="firefly_sbx"/>

|

||||

<arg name="fixed_frame_id" default="world"/>

|

||||

<arg name="calibration_file"

|

||||

default="$(find msckf_vio)/config/camchain-imucam-tum-scaled.yaml"/>

|

||||

|

||||

<!-- Image Processor Nodelet -->

|

||||

<include file="$(find msckf_vio)/launch/image_processor_tinytum.launch">

|

||||

<arg name="robot" value="$(arg robot)"/>

|

||||

<arg name="calibration_file" value="$(arg calibration_file)"/>

|

||||

</include>

|

||||

|

||||

<!-- Msckf Vio Nodelet -->

|

||||

<group ns="$(arg robot)">

|

||||

<node pkg="nodelet" type="nodelet" name="vio"

|

||||

args='standalone msckf_vio/MsckfVioNodelet'

|

||||

output="screen">

|

||||

|

||||

<param name="FILTER" value="0"/>

|

||||

|

||||

<!-- Debugging Flags -->

|

||||

<param name="StreamPause" value="true"/>

|

||||

<param name="PrintImages" value="false"/>

|

||||

<param name="GroundTruth" value="false"/>

|

||||

|

||||

<param name="patch_size_n" value="3"/>

|

||||

<param name="image_scale" value ="1"/>

|

||||

|

||||

<!-- Calibration parameters -->

|

||||

<rosparam command="load" file="$(arg calibration_file)"/>

|

||||

|

||||

<param name="publish_tf" value="true"/>

|

||||

<param name="frame_rate" value="20"/>

|

||||

<param name="fixed_frame_id" value="$(arg fixed_frame_id)"/>

|

||||

<param name="child_frame_id" value="odom"/>

|

||||

<param name="max_cam_state_size" value="12"/>

|

||||

<param name="position_std_threshold" value="8.0"/>

|

||||

|

||||

<param name="rotation_threshold" value="0.2618"/>

|

||||

<param name="translation_threshold" value="0.4"/>

|

||||

<param name="tracking_rate_threshold" value="0.5"/>

|

||||

|

||||

<!-- Feature optimization config -->

|

||||

<param name="feature/config/translation_threshold" value="-1.0"/>

|

||||

|

||||

<!-- These values should be standard deviation -->

|

||||

<param name="noise/gyro" value="0.005"/>

|

||||

<param name="noise/acc" value="0.05"/>

|

||||

<param name="noise/gyro_bias" value="0.001"/>

|

||||

<param name="noise/acc_bias" value="0.01"/>

|

||||

<param name="noise/feature" value="0.035"/>

|

||||

|

||||

<param name="initial_state/velocity/x" value="0.0"/>

|

||||

<param name="initial_state/velocity/y" value="0.0"/>

|

||||

<param name="initial_state/velocity/z" value="0.0"/>

|

||||

|

||||

<!-- These values should be covariance -->

|

||||

<param name="initial_covariance/velocity" value="0.25"/>

|

||||

<param name="initial_covariance/gyro_bias" value="0.01"/>

|

||||

<param name="initial_covariance/acc_bias" value="0.01"/>

|

||||

<param name="initial_covariance/extrinsic_rotation_cov" value="3.0462e-4"/>

|

||||

<param name="initial_covariance/extrinsic_translation_cov" value="2.5e-5"/>

|

||||

<param name="initial_covariance/irradiance_frame_bias" value="0.1"/>

|

||||

|

||||